The Good Stuff by Qiaochu Y. '12

yeah, man

As a freshman, I made the conscious decision not to live where I knew a lot of other math majors would be (Random). I figured I would have the rest of my life to meet other math people, and I really wanted to use college as an opportunity to expand my horizons and meet other kinds of people. I've definitely succeeded in that goal, both at Senior Haus (where I lived as a freshman) and at Theta Xi (where I live now). As a side effect, though, I never really became involved with the math community at MIT, and I don't often talk to other MIT students about the stuff I'm studying.

A lot of people, even at MIT, don't really like math. The story I hear too often is that they loved math up to a certain point, then got a terrible math teacher, then it stopped making sense to them and they hated it after that. It's a sad story. Math very much builds on itself, and if you miss a vital piece of foundation, then your math is going to be fragile and prone to collapse. You can probably take literature classes in college without taking literature classes in high school, but good luck trying to take math classes in college without taking math classes in high school.

The saddest part, though, is that most people never get to the good stuff! Most of what gets taught in grade school doesn't really deserve to be called "math." It's really closer to what mathematician John Allen Paulos calls numeracy. It's important to distinguish mathematics from numeracy in the same way that it's important to distinguish literature from literacy. Literature is art; literacy is a basic skill. And even the stuff that isn't numeracy – trigonometry, for example – is absurdly old. Haven't you ever wondered what mathematicians have been up to since then?

I think the good stuff is beautiful – some of the most beautiful stuff in human history – and I want more people to at least know what it looks like. So I'd like to give some non-technical descriptions of the courses I took last fall at the University of Cambridge through CME. (I'd describe the courses I'm taking now, but most of them are graduation requirements.) It's a little harder to do this for math classes, especially purer math classes, than other classes because I can't just give short, easily understandable descriptions like

2.665: Build robots.

2.666: Build robots that shoot lasers.

I have to explain at least roughly what some abstract concept is, and also why studying it is interesting. I haven't really tried to do this before, but I might as well start now, right? So let's see how I do. Feel free to ask in the comments for clarification!

Galois Theory

The quadratic formula tells you how to find the roots of a quadratic polynomial in terms of square roots. There's also a cubic formula for finding the roots of a cubic polynomial in terms of square roots and cube roots, although it's huge and impractical to use. There's even a quartic formula, which is even huger and more impractical. You might expect, based on this pattern, that there's a quintic formula that takes pages and pages to write down.

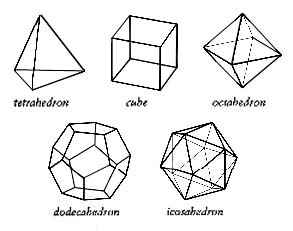

But something much more interesting is true: there is no quintic formula! I bet you're wondering why. The modern explanation, in terms of Galois theory, goes something like this: the roots of a polynomial are not as different from each other as they seem. In fact, in certain situations you can swap around some of them, and it doesn't really matter. In other words, the roots have certain symmetries, which are mathematically described using the notion of a group. Rather than try to explain what this means, I'll give some examples: think of the rotational and reflectional symmetries of a regular polygon, or of a Platonic solid.

Galois discovered an amazing relationship between these symmetries and writing down generalizations of the quadratic formula. It turns out that our ability to write down generalizations of the quadratic formula for a given polynomial depends on how complicated the symmetries of its roots are. For quadratic polynomials, the only interesting case is where you can swap the two roots, which is a very simple symmetry. For cubic polynomials, you can either cyclically permute the three roots, or you can in addition swap two of them: think of the rotational (then reflectional) symmetries of a triangle. For quartic polynomials, there are a few more possibilities: think of the rotational (then reflectional) symmetries of a square, then of a rectangle, then of a tetrahedron. It turns out that none of these are particularly complicated in the sense above, which is why we can write down quadratic, cubic, and quartic formulas.

For quintic polynomials, it can happen that the symmetries are too complicated: they can look like the rotational symmetries of an icosahedron, or something even bigger that contains them! And this turns out to be too complicated to allow for a quintic formula to exist.
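For the curious, the precise version of "not too complicated" is a property called solvability, and for small cases it can be checked by brute force. The sketch below is only an illustration, not something from the course. It takes the most complicated case, where every rearrangement of the n roots is allowed, and repeatedly passes to the subgroup generated by commutators. The function names are made up for this example. If the sizes shrink all the way down to 1, the symmetries are tame enough for a formula; if they get stuck above 1, they aren't.

```python
from itertools import permutations


def compose(p, q):
    """Return the permutation 'apply q first, then p'."""
    return tuple(p[i] for i in q)


def inverse(p):
    """Return the permutation that undoes p."""
    inv = [0] * len(p)
    for i, image in enumerate(p):
        inv[image] = i
    return tuple(inv)


def generated_subgroup(generators, n):
    """Close a set of permutations of n items under composition."""
    identity = tuple(range(n))
    group = {identity}
    frontier = [identity]
    while frontier:
        g = frontier.pop()
        for s in generators:
            h = compose(g, s)
            if h not in group:
                group.add(h)
                frontier.append(h)
    return group


def commutator_subgroup(group, n):
    """Subgroup generated by all a*b*a^-1*b^-1 with a, b in group."""
    commutators = {
        compose(compose(a, b), compose(inverse(a), inverse(b)))
        for a in group
        for b in group
    }
    return generated_subgroup(commutators, n)


def derived_series_sizes(n):
    """Sizes of the successive commutator subgroups, starting from all
    permutations of n roots and stopping once the size stops shrinking."""
    group = set(permutations(range(n)))
    sizes = [len(group)]
    while True:
        smaller = commutator_subgroup(group, n)
        if len(smaller) == len(group):
            break
        group = smaller
        sizes.append(len(group))
    return sizes


for n in (2, 3, 4, 5):
    print(n, derived_series_sizes(n))
```

For 2, 3, and 4 roots the sizes shrink to 1 (for 4 roots they go 24, 12, 4, 1). For 5 roots they get stuck at 60, which is exactly the number of rotational symmetries of an icosahedron.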

Galois theory is related at least by analogy to a wide swath of modern mathematics, and in particular complicated descendants of Galois theory were fundamental to Wiles' proof of Fermat's Last Theorem.

Rough MIT equivalent: Studied in 18.702.

Graph Theory

Put six people into a room. Then either three of them will all be friends with each other or three of them will all be strangers. A sociologist once observed this and thought he might have made some deep sociological discovery, but he consulted some mathematicians first and learned that what he had observed instead was pure mathematical fact: what I just said is true regardless of which people are friends with which other people!

The relevant structure here is that of a graph, a collection of nodes connected by edges. Above, the nodes are the six people and the edges indicate who is friends with whom. Another example of significant practical importance is the graph whose nodes are all websites on the internet and where an edge between two nodes means one links to the other. A surprising number of questions in mathematics can be phrased as questions about graphs, and there are all sorts of interesting questions you can ask about them that turn out to have interesting answers. Algorithms that deal with graphs are also extremely important in computer science and have many applications, both practical and theoretical.
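The six-people claim is small enough to check by computer. Here is a minimal sketch, assuming we encode a party as a choice of "friends" or "strangers" for each pair of people. The function name is invented for this example. It simply tries every possible party of a given size and looks for three people who are all friends or all strangers.

```python
from itertools import combinations, product


def every_party_has_uniform_triple(n_people):
    """Check all friend/stranger assignments among n_people people.

    Returns True if every assignment contains three people who are
    mutually friends or mutually strangers, and False otherwise.
    """
    pairs = list(combinations(range(n_people), 2))
    triples = list(combinations(range(n_people), 3))
    for choice in product((True, False), repeat=len(pairs)):
        # friends[(a, b)] is True for friends, False for strangers
        friends = dict(zip(pairs, choice))
        if not any(
            friends[(a, b)] == friends[(a, c)] == friends[(b, c)]
            for a, b, c in triples
        ):
            return False  # found a party with no such triple
    return True


print(every_party_has_uniform_triple(5))  # False
print(every_party_has_uniform_triple(6))  # True
```

With six people there are 15 pairs, so only 32,768 parties to check. With five people the claim can fail: seat them around a table and make each person friends with exactly their two neighbors.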

Rough MIT equivalent: Studied in 18.304 and 6.042.

Linear Analysis

More commonly known as functional analysis, linear analysis is roughly speaking the study of infinite-dimensional vectors and matrices. The study of many interesting differential equations can be phrased as the study of properties of certain infinite-dimensional matrices, and differential equations are a powerful tool in both pure and applied mathematics, so functional analysis finds applications everywhere.
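To make "infinite-dimensional matrix" a little less mysterious, here is a small sketch, assuming we describe a polynomial by its list of coefficients. Taking a derivative is then multiplication by a matrix that goes on forever, and the code below builds only its top-left corner. The helper names are invented for this illustration.

```python
def derivative_matrix(size):
    """Top-left size-by-size corner of the matrix of d/dx
    in the basis 1, x, x^2, x^3, ..."""
    return [
        [col if col == row + 1 else 0 for col in range(size)]
        for row in range(size)
    ]


def apply(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


# p(x) = 1 + 2x + 3x^2, stored as the coefficients of 1, x, x^2, x^3
coeffs = [1, 2, 3, 0]
D = derivative_matrix(len(coeffs))
print(apply(D, coeffs))  # [2, 6, 0, 0], i.e. p'(x) = 2 + 6x
```

No finite corner is big enough to handle every polynomial, let alone more general functions, which is where the "infinite-dimensional" part comes in.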

Rough MIT equivalent: Somewhere between 18.100B and 18.102.

Logic and Set Theory

It's difficult to explain what the point of this class is without explaining something called the foundational crisis in mathematics. Here is a very rough summary of what happened: mathematicians discovered that certain naive ways of constructing mathematical objects led to logical contradictions. To explain the kind of problem that mathematicians ran into, let me use the Grelling-Nelson paradox, which goes like this: some adjectives have the funny property that they don't describe themselves. For example, "monosyllabic" doesn't describe itself because it is polysyllabic. Let's say that such words are heterological.

Is "heterological" a heterological word?

If it is, then it doesn't describe itself, so it isn't. But if it isn't, then it describes itself, so it is! The mathematical version of this, called Russell's paradox, allows you to write down a mathematical object which both does and doesn't have a certain property. This is a contradiction, which is bad news; if you allow yourself a contradiction, you can prove anything. I had fun doing this in middle school by writing down proofs of statements like "Mr. Black [my teacher] is a carrot" starting from a "proof" that 0 = 1.

Anyway, this and other developments convinced mathematicians that they were being too naive about how they constructed mathematical objects, so they tried to write down rules that would allow them to construct all the objects they wanted without leading to contradictions. These rules are, roughly speaking, the subject of set theory. The study of how rules like the rules of set theory work is the subject of logic.

Rough MIT equivalent: 18.510.

Probability and Measure

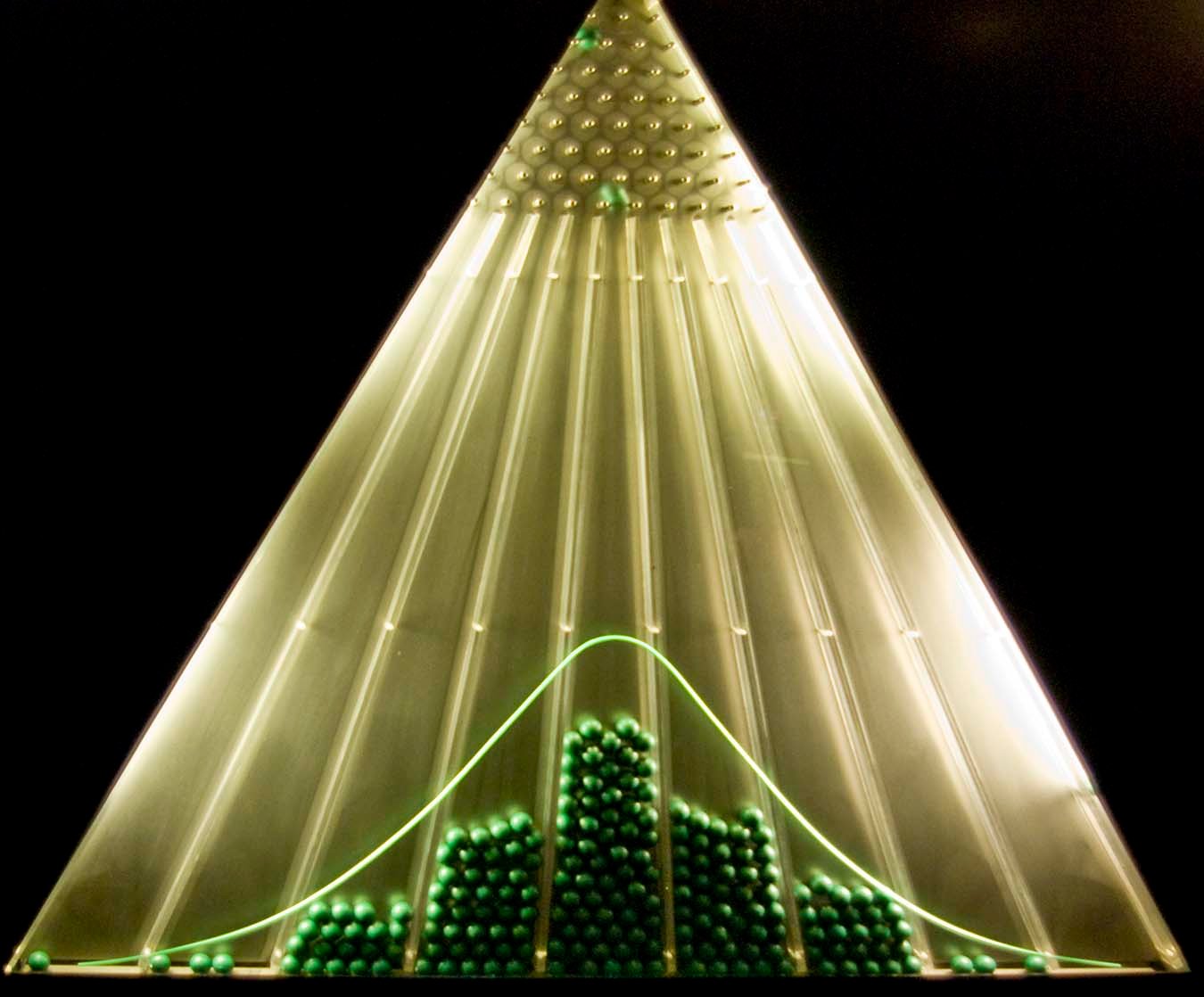

Flip a coin a bunch of times. Approximately what proportion of the flips will be heads? About half, okay, but how does the deviation from exactly half behave? As it turns out, the deviation from exactly half looks like a bell curve: mathematicians say it is (approximately) normally distributed, and gets more so the more coins you flip.

But this isn't just a fact about coins; analogous statements are true if you replace coins by dice or by even more complicated random phenomena. This fundamental result, known as the central limit theorem, explains at least heuristically why bell curves appear in nature: many (but by no means all!) natural phenomena occur due to the accumulation of a large number of independent, essentially random phenomena, such as properties of organisms controlled by a large number of genes.
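The coin-flipping claim is easy to test numerically. Here is a rough sketch, assuming a fair coin simulated with Python's built-in random number generator. The function names and default numbers are arbitrary choices for this example. It repeats the experiment many times and measures how often the number of heads lands within one standard deviation of half. A bell curve predicts this should happen roughly 68% of the time.

```python
import random


def heads_in(n_flips, rng):
    """Count heads among n_flips fair coin flips (one random bit per flip)."""
    return bin(rng.getrandbits(n_flips)).count("1")


def fraction_within_one_sd(n_flips=1000, n_trials=2000, seed=0):
    """Fraction of trials whose head count is within one standard
    deviation of n_flips / 2."""
    rng = random.Random(seed)
    mean = n_flips / 2
    sd = (n_flips ** 0.5) / 2  # standard deviation of the head count
    hits = sum(
        abs(heads_in(n_flips, rng) - mean) <= sd
        for _ in range(n_trials)
    )
    return hits / n_trials


print(fraction_within_one_sd())
```

The printed fraction will wobble a bit with the seed and the number of trials, but it should stay in the neighborhood of the bell curve's prediction.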

The central limit theorem, as a mathematical fact, also has applications in mathematics; however, in mathematics, the random phenomena that need to be considered are very general. To handle them, mathematicians invented measure theory, the general study of "measures" (such as volumes, but also such as probabilities). This is a somewhat technical subject, but a very valuable tool: it gives us, among other things, a very flexible notion of integration.

Rough MIT equivalent: 18.125, with some material from 18.440/18.175.

It doesn’t sound any weirder to me than publicizing theoretical physics. I contend that there are plenty of people who would be interested in mathematics (as distinguished from numeracy) except that they had a terrible teacher too early to get exposed to the good stuff, and I’m hoping I can find and convert some of those people by popularizing.

I bet you definitely do a great job, if there are these people. This post is amazing!

Hehe. I really loved math in high school, but 18.01/18.02/18.03 were just not my style and turned me off to math. Now that I’m taking 6.042J (aka 18.062J), which is stylized more after a “real math class”, I absolutely *love* it. Completely unexpected O.o

Interesting, just the other day I was having a conversation with a freshman about people being turned away from math without being exposed to real math.

(This came up because I think I’m not particularly interested in math after being exposed to real math. Some theoretical results are really pretty, but the vast majority of it is difficult for me to care about because it’s just so abstract. Put another way, I do like to think about math; I don’t want to think about math all my life.)

Parenthesized comment said, I do support publicizing math as opposed to numeracy, because it is beautiful. Kudos.

@Anthony: I’m curious what you specifically have in mind when you talk about things that are difficult to care about because it’s so abstract. Abstraction is in the eye of the beholder and I think a lot can be done to make the abstract seem more concrete just by knowing the right examples.

1st math post here? Fascinating, Qiaochu! But publicizing math (instead of numeracy) sounds a little weird…

Not to discourage you or anything. I was just assuming those who would be interested in the good stuff would nonetheless go to that stuff.

this is a super awesome and informative entry! thank you for giving us an idea of some of the most intricate, yet beautiful mathematical concepts

i would like to ask for some help, if I may. I’m a freshman at Mount Holyoke College and would like to get a transfer to MIT as a math major. I’d be obliged if you could send me your email address at [email protected]. i really need your guidance.

I don’t know if “abstract” is the right word. I’m trying to find another way to express what I mean.

To take an example from PROMYS two summers ago (which I immensely enjoyed, for the record), continued fractions. They’re really cool, and they have a few good uses, and you could probably research their various properties indefinitely, but I wouldn’t want to do so for the rest of my life because at one point it seems like all further results would become this self-contained bubble with no wider implications elsewhere.

Is that acceptable?

@Anthony: I see where you’re coming from, but being interested in continued fractions doesn’t entail ignoring the rest of mathematics. Continued fractions are related to a lot of interesting stuff, and I personally am a big fan of continued fractions where the entries are polynomials instead of integers (you get generating functions describing certain types of trees). Mathematics is highly connected, and you’d be surprised what ends up having wider implications for what.

@Charles: sure. It’s not mine; I’m pretty sure I read it in Paulos’ book.

Hey Qiaochu, what’s your process for reading math textbooks? I’ve been trying to teach myself some stuff outside of class, but I find that after I’m done I don’t really know anything new. In addition, I’ve been writing down every theorem/proof, and getting really bogged down in details. Plus the authors skip a ton of non-trivial steps (at least in grad level books). Because of this I have no inclination to do exercises — once I’ve finally slogged through a chapter I just want to move on. Do you have any suggestions?

Thanks!

My process is to not just read textbooks. Expository papers can provide a lot of valuable perspective that textbooks don’t, as can blog posts, threads on MathOverflow or math.StackExchange, etc. It’s also valuable to look for multiple textbooks on a topic, especially at different levels. If grad textbooks are too dry for you, try undergrad, and don’t limit yourself to just Springer.

Part of how I learn things is also by blogging about them. This is valuable for several reasons: blogging forces me to solidify my ideas, it often exposes connections I didn’t know were there before I started blogging, and blogging my way through a proof makes it much more likely I’ll remember it (especially if I’ve forgotten parts of the proof and am filling them back in on my own).

Damn, I like that literacy/numeracy analogy. Can I steal it in perpetuity?

Finally I come across someone who thinks that numbers alone don’t comprise the entire field of maths!

Honestly I am not good at calculations and my friends think I am dumb in maths. But I love the theoretical part of maths: calculus, functions, etc. I find them so much more interesting.. I have tried so many times to tell the people around me that maths is more than numbers and boring calculations, that it is theoretical and awesome when you understand it.. but to no avail sadly.. (anyways who cares about them!!)

Even I learn somewhat the same ways you do. Mine is much weirder and if people see it, they would be convinced without a doubt that I am mental asylum escapee!! Instead of blogging mine involves imagining that I am giving a lecture. So I speak about things that I have learned and at the same time I keep learning more when I speak more!!